A function may not be equal to its Taylor series, even if its Taylor series converges at every point. The Taylor series of a function is the limit of that function's Taylor polynomials as the degree increases, provided that the limit exists.
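A standard illustration of this claim, offered here as a well-known textbook example rather than something taken from the text above, is the function

$$
f(x) =
\begin{cases}
e^{-1/x^{2}}, & x \neq 0, \\
0, & x = 0.
\end{cases}
$$

Every derivative of $f$ at $0$ is zero, so its Taylor series centered at $0$ is

$$
\sum_{n=0}^{\infty} \frac{f^{(n)}(0)}{n!}\, x^{n} = 0 \quad \text{for every } x.
$$

That series converges at every point, yet it agrees with $f$ only at $x = 0$, because $f(x) > 0$ whenever $x \neq 0$.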

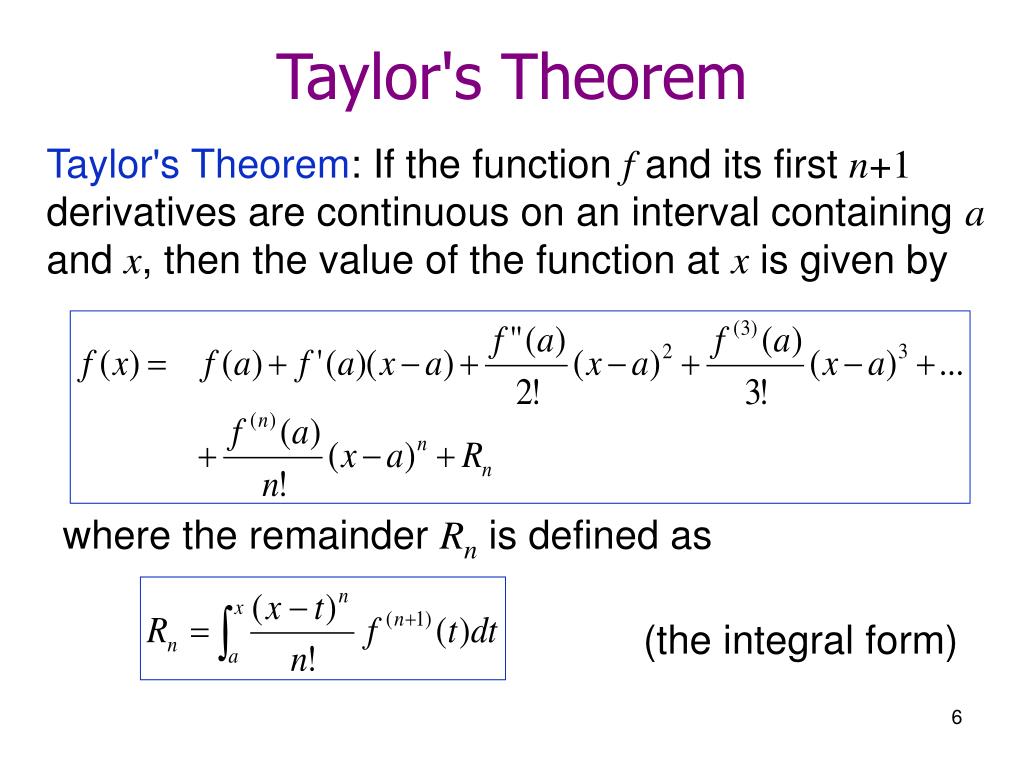

The polynomial formed by taking some initial terms of the Taylor series is called a Taylor polynomial. Taylor's theorem gives quantitative estimates on the error introduced by the use of such an approximation. If the Taylor series is centered at zero, then that series is also called a Maclaurin series, named after the Scottish mathematician Colin Maclaurin, who made extensive use of this special case of Taylor series in the 18th century. A function can be approximated by using a finite number of terms of its Taylor series. The concept of a Taylor series was formulated by the Scottish mathematician James Gregory and formally introduced by the English mathematician Brook Taylor in 1715. In mathematics, a Taylor series is a representation of a function as an infinite sum of terms that are calculated from the values of the function's derivatives at a single point.
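To make the idea of approximating a function by a finite number of terms concrete, here is a minimal Python sketch. It assumes the exponential function as the example, chosen because every derivative of exp at 0 equals 1, so the degree-N Maclaurin polynomial is the sum of x^n / n! for n from 0 to N. The function names `maclaurin_exp` and `approximation_errors` are hypothetical, introduced only for illustration.

```python
import math


def maclaurin_exp(x, degree):
    """Evaluate the Taylor polynomial of exp centered at 0, up to x**degree."""
    total = 0.0
    term = 1.0  # current term x**n / n!, starting at n = 0
    for n in range(degree + 1):
        total += term
        term *= x / (n + 1)  # build x**(n+1) / (n+1)! from the previous term
    return total


def approximation_errors(x, degrees):
    """Return (degree, absolute error against math.exp) for each degree."""
    exact = math.exp(x)
    return [(d, abs(exact - maclaurin_exp(x, d))) for d in degrees]


if __name__ == "__main__":
    # The error should shrink as more terms of the series are kept.
    for degree, error in approximation_errors(1.0, [1, 2, 4, 8]):
        print(f"degree {degree}: error {error:.3e}")
```

Each term is built from the previous one instead of calling a factorial function, which avoids recomputing large factorials. The shrinking error at a fixed point is the behavior that Taylor's theorem bounds quantitatively.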
